Vector stores

This conceptual overview focuses on text-based indexing and retrieval for simplicity. However, embedding models can be multi-modal and vectorstores can be used to store and retrieve a variety of data types beyond text.

Overview

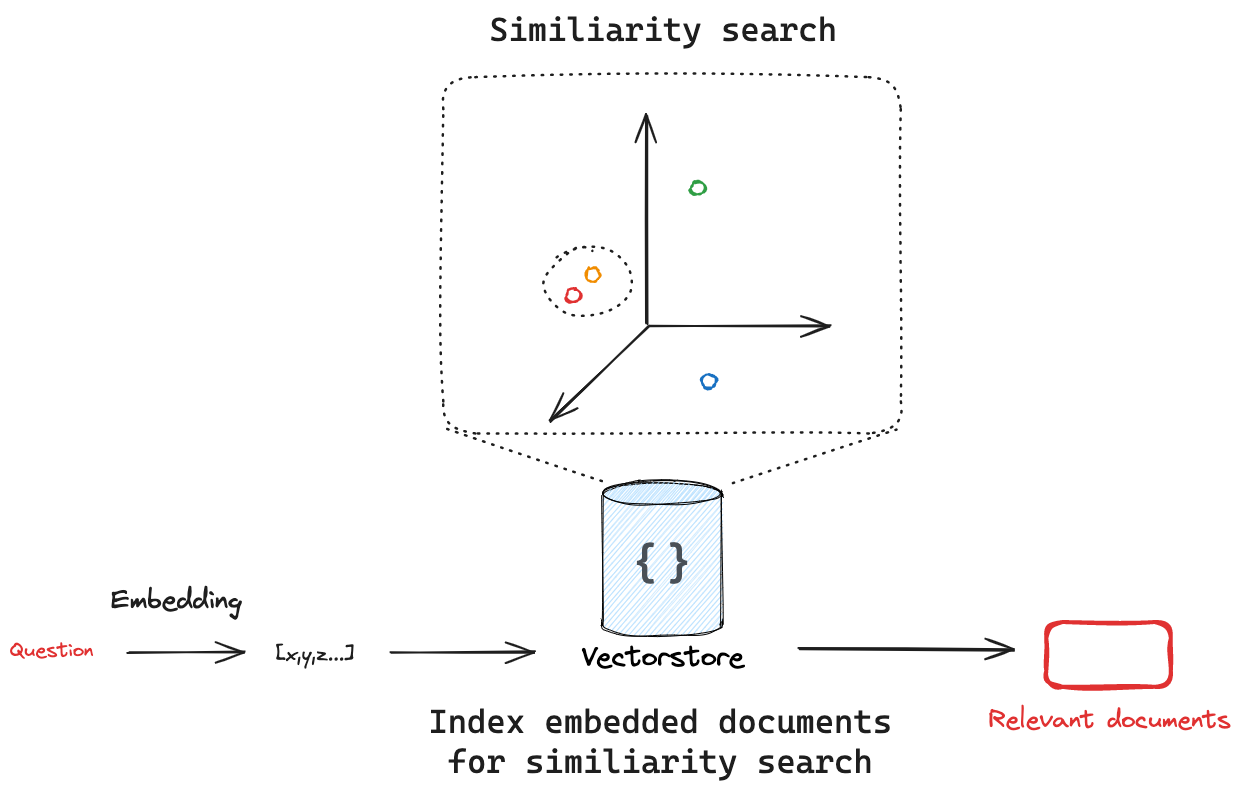

Vectorstores are a powerful and efficient way to index and retrieve unstructured data. They leverage vector embeddings, which are numerical representations of unstructured data that capture semantic meaning. At their core, vectorstores utilize specialized data structures called vector indices. These indices are designed to perform efficient similarity searches over embedding vectors, allowing for rapid retrieval of relevant information based on semantic similarity rather than exact keyword matches.

Key concept

There are many different types of vectorstores. LangChain provides a universal interface for working with them, with standard methods for common operations.

Adding documents

Using Pinecone as an example, we initialize a vectorstore with the embedding model we want to use:

import os

from pinecone import Pinecone

from langchain_openai import OpenAIEmbeddings

from langchain_pinecone import PineconeVectorStore

# Initialize Pinecone

pc = Pinecone(api_key=os.environ["PINECONE_API_KEY"])

# Name of an existing Pinecone index (placeholder value, replace with your own)

index_name = "my-index"

# Initialize with an embedding model

vector_store = PineconeVectorStore(index=pc.Index(index_name), embedding=OpenAIEmbeddings())

Given a vectorstore, we need the ability to add documents to it.

The add_texts and add_documents methods can be used to add texts (strings) and documents (LangChain Document objects) to a vectorstore, respectively.

As an example, we can create a list of Documents.

Document objects all have page_content and metadata attributes, making them a universal way to store unstructured text and associated metadata.

from langchain_core.documents import Document

document_1 = Document(

page_content="I had chocolate chip pancakes and scrambled eggs for breakfast this morning.",

metadata={"source": "tweet"},

)

document_2 = Document(

page_content="The weather forecast for tomorrow is cloudy and overcast, with a high of 62 degrees.",

metadata={"source": "news"},

)

documents = [document_1, document_2]

When we use the add_documents method to add the documents to the vectorstore, the vectorstore will use the provided embedding model to create an embedding of each document.

What happens if we add the same document twice?

Many vectorstores support upsert functionality, which combines the functionality of inserting and updating records.

To use this, we simply supply a unique identifier for each document when we add it to the vectorstore using add_documents or add_texts.

If the record doesn't exist, it inserts a new record.

If the record already exists, it updates the existing record.

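from uuid import uuid4

# Given a list of documents and a vector store, generate one unique ID per document.

# Reusing the same IDs in a later call updates those records instead of duplicating them.

uuids = [str(uuid4()) for _ in range(len(documents))]

vector_store.add_documents(documents=documents, ids=uuids)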
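The insert-or-update behavior described above can be sketched with a plain dictionary keyed by document ID. This is a minimal illustration, not how any particular vectorstore is implemented: `ToyVectorStore` and `toy_embed` are hypothetical names, and the "embedding" is a crude two-number stand-in for a real model.

```python
def toy_embed(text):
    """Hypothetical stand-in for an embedding model: two crude numeric features."""
    return [float(len(text)), float(text.count(" "))]


class ToyVectorStore:
    """In-memory sketch that maps each ID to an (embedding, text) pair."""

    def __init__(self, embed):
        self.embed = embed
        self.records = {}

    def add_texts(self, texts, ids):
        for text, doc_id in zip(texts, ids):
            # Assigning to an existing key overwrites the record (update);
            # assigning to a new key creates it (insert).
            self.records[doc_id] = (self.embed(text), text)
        return ids


store = ToyVectorStore(toy_embed)
store.add_texts(["pancakes for breakfast"], ids=["doc-1"])
store.add_texts(["pancakes and eggs for breakfast"], ids=["doc-1"])  # same ID: update
store.add_texts(["cloudy tomorrow"], ids=["doc-2"])  # new ID: insert
```

After these three calls the store holds two records, and `doc-1` carries the text from the second call. Note that upsert only helps if the IDs are stable: generating fresh random IDs on every run would insert duplicates instead.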

- See the full list of LangChain vectorstore integrations.

- See Pinecone's documentation on the upsert method.

Search

Vectorstores embed and store the documents that are added. If we pass in a query, the vectorstore will embed the query, perform a similarity search over the embedded documents, and return the most similar ones. This involves two important concepts: first, there needs to be a way to measure the similarity between the query and any embedded document. Second, there needs to be an algorithm to efficiently perform this similarity search across all embedded documents.

Similarity metrics

A critical advantage of embedding vectors is that they can be compared using many simple mathematical operations:

- Cosine Similarity: Measures the cosine of the angle between two vectors.

- Euclidean Distance: Measures the straight-line distance between two points.

- Dot Product: Measures the projection of one vector onto another.
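As a rough illustration of the three metrics listed above, here is a dependency-free sketch. The function names and the toy two-dimensional vectors are chosen for this example only; real embeddings have hundreds or thousands of dimensions.

```python
import math


def dot_product(a, b):
    """Sum of element-wise products; grows with both alignment and magnitude."""
    return sum(x * y for x, y in zip(a, b))


def euclidean_distance(a, b):
    """Straight-line distance between two points; smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; ignores magnitude."""
    norm_a = math.sqrt(dot_product(a, a))
    norm_b = math.sqrt(dot_product(b, b))
    return dot_product(a, b) / (norm_a * norm_b)


a = [1.0, 0.0]
b = [2.0, 0.0]  # same direction as a, twice the length
c = [0.0, 3.0]  # orthogonal to a
```

Here `a` and `b` point the same way, so their cosine similarity is 1 even though their Euclidean distance is 1 and their dot product is 2. `a` and `c` are orthogonal, so both their cosine similarity and their dot product are 0. When all vectors are normalized to unit length, the three metrics produce the same ranking.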

The similarity metric can sometimes be chosen when initializing the vectorstore. As an example, Pinecone allows the user to select the similarity metric on index creation.

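pc.create_index(

name=index_name,

dimension=3072,  # must match the output size of the embedding model in use

metric="cosine",  # similarity metric, chosen at index creation

)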

- See this documentation from Google on similarity metrics to consider with embeddings.

- See Pinecone's blog post on similarity metrics.

- See OpenAI's FAQ on what similarity metric to use with OpenAI embeddings.

Similarity Search

Given a similarity metric to measure the distance between the embedded query and any embedded document, we need an algorithm to efficiently search over all the embedded documents to find the most similar ones.

There are various ways to do this. As an example, many vectorstores implement HNSW (Hierarchical Navigable Small World), a graph-based index structure that allows for efficient similarity search.
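To see what such an index is speeding up, it can help to look at the exact baseline: a linear scan that scores every stored vector against the query. The sketch below is that brute-force approach, not HNSW. Graph-based indices like HNSW avoid visiting every vector, typically at the cost of returning approximate rather than exact neighbors. The function names and toy vectors are assumptions for illustration.

```python
import heapq
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def exact_top_k(query_vec, doc_vecs, k=4):
    """Linear scan: score every stored vector and keep the k best (index, score) pairs."""
    scored = ((cosine_similarity(query_vec, vec), i) for i, vec in enumerate(doc_vecs))
    return [(i, score) for score, i in heapq.nlargest(k, scored)]


doc_vecs = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [-1.0, 0.0]]
hits = exact_top_k([1.0, 0.05], doc_vecs, k=2)
```

The scan costs one similarity computation per stored vector for every query, which is why large collections rely on dedicated index structures instead.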

Regardless of the search algorithm used under the hood, the LangChain vectorstore interface has a similarity_search method for all integrations.

This will take the search query, create an embedding, find similar documents, and return them as a list of Documents.

query = "my query"

docs = vector_store.similarity_search(query)

Many vectorstores accept additional search parameters in the similarity_search method. See the documentation for the specific vectorstore you are using to see which parameters are supported.

As an example, Pinecone accepts several parameters that illustrate important general concepts. Many vectorstores support k, which controls the number of Documents to return, and filter, which allows for filtering documents by metadata.

- query (str) – Text to look up documents similar to.

- k (int) – Number of Documents to return. Defaults to 4.

- filter (dict | None) – Dictionary of argument(s) to filter on metadata.

- See the how-to guide for more details on how to use the similarity_search method.

- See the integrations page for more details on arguments that can be passed in to the similarity_search method for specific vectorstores.

Metadata filtering

While vectorstores implement a search algorithm to efficiently find the most similar embedded documents, many also support filtering on metadata. This allows structured filters to reduce the size of the similarity search space. These two concepts work well together:

- Semantic search: Query the unstructured data directly, often via embedding or keyword similarity.

- Metadata search: Apply a structured query to the metadata, filtering specific documents.

Vectorstore support for metadata filtering is typically dependent on the underlying vector store implementation.

Here is an example with Pinecone that filters for all documents whose metadata key source has the value tweet.

results = vector_store.similarity_search(

"LangChain provides abstractions to make working with LLMs easy",

k=2,

filter={"source": "tweet"},

)

- See Pinecone's documentation on filtering with metadata.

- See the list of LangChain vectorstore integrations that support metadata filtering.
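The way a structured filter narrows the search space before ranking can be sketched in a few lines. This is an illustrative model only: it assumes exact-match filter semantics, whereas real vectorstores each define their own filter syntax and may apply the filter at a different stage. The names `matches` and `filtered_search` and the toy records are hypothetical.

```python
def dot_product(a, b):
    return sum(x * y for x, y in zip(a, b))


def matches(metadata, metadata_filter):
    """Exact-match filter: every key in the filter must equal the record's value."""
    if not metadata_filter:
        return True
    return all(metadata.get(key) == value for key, value in metadata_filter.items())


def filtered_search(query_vec, records, k=4, metadata_filter=None):
    # Step 1: the structured filter shrinks the candidate set.
    candidates = [r for r in records if matches(r["metadata"], metadata_filter)]
    # Step 2: similarity ranking runs only over the surviving candidates.
    candidates.sort(key=lambda r: dot_product(query_vec, r["vector"]), reverse=True)
    return candidates[:k]


records = [
    {"id": "a", "vector": [0.9, 0.1], "metadata": {"source": "tweet"}},
    {"id": "b", "vector": [1.0, 0.0], "metadata": {"source": "news"}},
    {"id": "c", "vector": [0.2, 0.8], "metadata": {"source": "tweet"}},
]
results = filtered_search([1.0, 0.0], records, k=2, metadata_filter={"source": "tweet"})
```

Record `b` is the closest match to the query, but it is excluded because its source is news, so the search returns `a` followed by `c`.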

Advanced search and retrieval techniques

While algorithms like HNSW provide the foundation for efficient similarity search in many cases, additional techniques can be employed to improve search quality and diversity.

For example, maximal marginal relevance is a re-ranking algorithm used to diversify search results, which is applied after the initial similarity search to ensure a more diverse set of results.
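A minimal sketch of the re-ranking step follows, assuming the common greedy formulation: at each step, pick the candidate that maximizes a weighted relevance to the query minus its highest similarity to anything already selected. The parameter name `lambda_mult` and the toy vectors are choices made for this example.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def mmr(query_vec, doc_vecs, k=2, lambda_mult=0.5):
    """Greedy MMR: returns indices of k documents, trading relevance against redundancy."""
    selected = []
    remaining = list(range(len(doc_vecs)))

    def score(i):
        relevance = cosine_similarity(query_vec, doc_vecs[i])
        # Highest similarity to any already-selected document (0 if none yet).
        redundancy = max(
            (cosine_similarity(doc_vecs[i], doc_vecs[j]) for j in selected),
            default=0.0,
        )
        return lambda_mult * relevance - (1 - lambda_mult) * redundancy

    while remaining and len(selected) < k:
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


query = [1.0, 0.2]
docs = [[0.99, 0.05], [1.0, 0.0], [0.6, 0.8]]  # docs 0 and 1 are near-duplicates

diverse = mmr(query, docs, k=2, lambda_mult=0.5)
relevance_only = mmr(query, docs, k=2, lambda_mult=1.0)
```

With `lambda_mult=1.0` the redundancy term vanishes and the two near-duplicates are returned. With `lambda_mult=0.5` the second pick is penalized for resembling the first, so the less similar document 2 is chosen instead.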

As a second example, some vector stores offer built-in hybrid search, which combines keyword and semantic similarity search and marries the benefits of both approaches.

At the moment, there is no unified way to perform hybrid search using LangChain vectorstores, but it is generally exposed as a keyword argument that is passed in with similarity_search.

See this how-to guide on hybrid search for more details.
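One simple way to picture hybrid search is as a weighted blend of a keyword score and a semantic score. The sketch below is an assumption-laden illustration: the term-overlap keyword score is a crude stand-in for rankers such as BM25, the `semantic` values are made-up placeholders for scores an embedding model would produce, and actual vectorstores use their own fusion schemes.

```python
def keyword_score(query, text):
    """Fraction of distinct query terms that appear in the text."""
    query_terms = set(query.lower().split())
    text_terms = set(text.lower().split())
    if not query_terms:
        return 0.0
    return len(query_terms & text_terms) / len(query_terms)


def hybrid_score(semantic, keyword, alpha=0.5):
    """alpha=1.0 is purely semantic, alpha=0.0 is purely keyword-based."""
    return alpha * semantic + (1 - alpha) * keyword


def hybrid_rank(query, docs, alpha=0.5):
    return sorted(
        docs,
        key=lambda d: hybrid_score(d["semantic"], keyword_score(query, d["text"]), alpha),
        reverse=True,
    )


# "semantic" holds hypothetical, precomputed similarity scores for the query.
docs = [
    {"text": "error code E42 when saving", "semantic": 0.60},
    {"text": "the application fails to store the file", "semantic": 0.80},
]
query = "E42 saving error"

blended = hybrid_rank(query, docs, alpha=0.5)
semantic_only = hybrid_rank(query, docs, alpha=1.0)
```

In this toy data the second document is semantically closer, but the first contains the exact identifier `E42`. Blending the two scores lets the exact match rise to the top, which is the kind of case hybrid search is meant to handle.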

| Name | When to use | Description |
|---|---|---|
| Hybrid search | When combining keyword-based and semantic similarity. | Hybrid search combines keyword and semantic similarity, marrying the benefits of both approaches. Paper. |
| Maximal Marginal Relevance (MMR) | When needing to diversify search results. | MMR attempts to diversify the results of a search to avoid returning similar and redundant documents. |